Artificial intelligence has moved from pilot to production. Enterprises are now running AI across customer support, sales, engineering, legal, and HR — often simultaneously, often with different tools, and often without IT’s knowledge.

When 80% of employees use AI tools outside approved systems — a pattern IBM now calls “shadow AI” — organizations face real exposure: data leaks, compliance violations, cost sprawl, and zero visibility into what their AI is actually doing.

AI governance is the answer. Not as a policy binder on a shelf, but as an enforcement layer built into how AI is used every day.

This guide covers everything you need to know: what AI governance means, why it matters right now, how an AI governance platform works, and what to look for when choosing one.

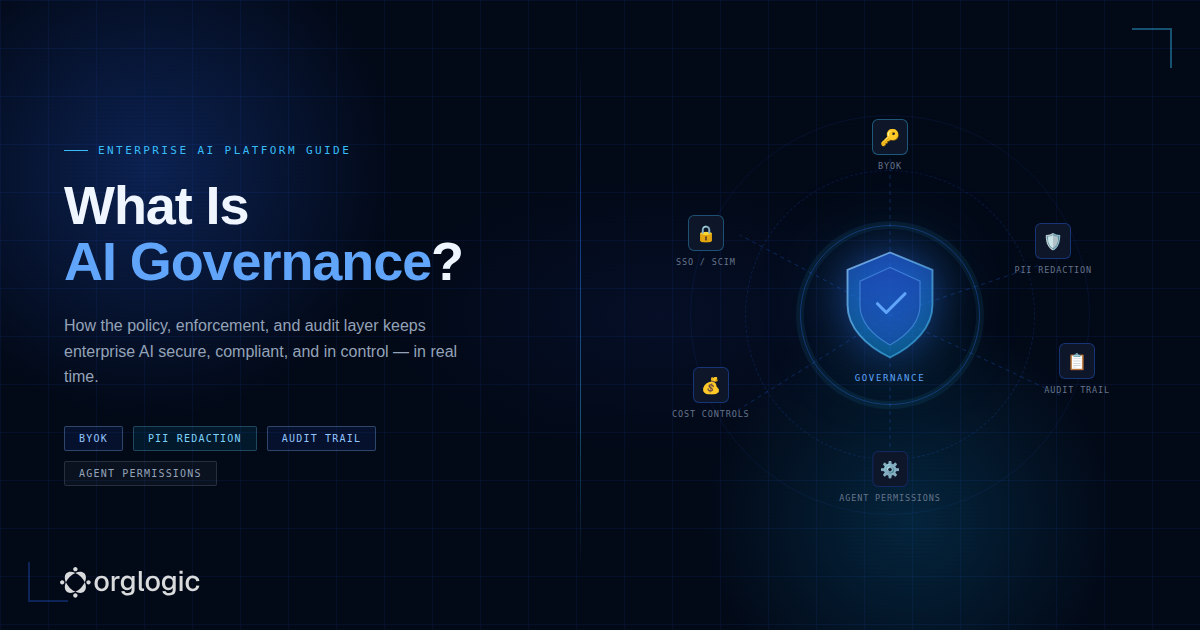

Quick answer: AI governance is the set of policies, controls, and enforcement mechanisms that determine who can use AI, what they can do with it, and how every interaction is logged and audited. A modern AI governance platform enforces these rules in real time — before data reaches a model, not after.


AI governance refers to the frameworks, processes, tools, and controls that ensure AI systems are used responsibly, securely, and in compliance with organizational policies and applicable regulations.

In practice, it answers three operational questions: who can use AI, what can they do with it, and how is every interaction logged and audited?

Most organizations can’t answer any of these today. That gap is exactly what AI governance closes.

It’s worth distinguishing governance from compliance. Compliance means meeting a regulatory requirement. Governance means building the infrastructure to meet it — and to keep meeting it as AI usage scales. You need governance to achieve compliance; you can’t get there with documents alone.

The case for AI governance has gone from theoretical to operational. Here’s what’s driving it:

Research consistently shows that 70–80% of employees use AI tools that haven’t been approved by IT or security. They’re using personal ChatGPT accounts, browser extensions, and consumer AI tools — and pasting in customer data, proprietary code, financial records, and confidential strategy documents.

This isn’t negligence. It’s productivity-seeking behavior in the absence of approved tools. The solution isn’t blocking AI. It’s giving employees governed AI that works better than what they’d find on their own.

The EU AI Act is now in phased enforcement. The SEC has issued guidance on AI use in financial services. HIPAA-covered entities are under increased scrutiny for AI-related data handling. ISO 42001 — the international standard for AI management systems — is now a procurement requirement for many enterprise contracts.

Boards and CISOs are being asked directly: “How do we govern our AI?” Organizations that can’t answer will face both regulatory and reputational consequences.

First-generation AI governance was about chat: controlling what employees typed into a chat window. That problem is hard enough.

Now enterprises are deploying AI agents — autonomous systems that take actions in Salesforce, Jira, ServiceNow, and GitHub. An agent that can create Jira tickets, update Salesforce records, and post to Slack is not just answering questions. It’s making changes.

Governing agent actions — what each agent can access, what it can do, and what it did — requires a new level of precision that most platforms don’t yet offer.


IBM’s 2025 Cost of a Data Breach report puts the average extra cost of a shadow AI-related breach at $670,000. Fragmented AI tool subscriptions cost enterprises an estimated $3,200 per employee per year in redundant, ungoverned spending. These are operational costs, not hypothetical risks.

The most useful way to understand a modern AI governance platform is through three layers: policy, execution, and audit. Most legacy approaches only cover the first and third. The execution layer — where actual enforcement happens — is what separates governance from documentation.

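This is where governance starts. Organizations define the rules that will govern AI usage across the enterprise: which models each team can use, how much each team, user, or agent can spend, which connectors each agent can reach, and which data patterns must be redacted.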

Good policy tools let administrators set these rules centrally and apply them across every surface where AI is used — web app, Slack bot, Teams integration, API, and browser extension.

This is the layer most governance tools miss. Policies written in a document don’t prevent a PII leak. Policies enforced at the point of interaction do.

In a well-designed AI governance platform, every interaction is evaluated against policy before it reaches a model.

If a rule is violated, the platform can block the request, redact sensitive content, flag it for review, or log it silently depending on severity. This happens before the data leaves the organization.
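The severity-based choice between blocking, redacting, flagging, and silent logging can be sketched as a small decision function. This is a minimal illustration, not any vendor's implementation: the severity names, the `SEVERITY_ACTIONS` mapping, and the `Violation` type are all assumptions made for the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Action(Enum):
    LOG = "log"        # record silently
    FLAG = "flag"      # send for human review
    REDACT = "redact"  # strip sensitive content, then forward
    BLOCK = "block"    # refuse the request


# Hypothetical mapping; in practice administrators would configure this.
SEVERITY_ACTIONS = {
    "low": Action.LOG,
    "medium": Action.FLAG,
    "high": Action.REDACT,
    "critical": Action.BLOCK,
}

# Actions ordered from least to most strict.
STRICTNESS = [Action.LOG, Action.FLAG, Action.REDACT, Action.BLOCK]


@dataclass
class Violation:
    rule: str
    severity: str


def decide(violations: list[Violation]) -> Optional[Action]:
    """Return the strictest action across all violations, or None if clean."""
    if not violations:
        return None
    actions = [SEVERITY_ACTIONS[v.severity] for v in violations]
    return max(actions, key=STRICTNESS.index)
```

Taking the strictest action when several rules fire is one reasonable design choice; a platform could equally apply every triggered action in sequence.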

Example: An employee pastes a customer’s SSN into a prompt. The governance platform detects the PII pattern, redacts it before the request is sent to the model, and logs the event with the user, timestamp, and original content. The employee gets a response. The SSN never leaves the building.
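A rough sketch of that flow, assuming SSNs appear in the common `NNN-NN-NNNN` format and that an in-memory list stands in for the audit store. The function name `redact_prompt`, the log field names, and the `[REDACTED-SSN]` placeholder are illustrative choices, not a real product API.

```python
import re
from datetime import datetime, timezone

# Assumed pattern: US SSNs written as 123-45-6789. Real detectors cover more formats.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Stand-in for a durable, access-controlled audit store.
audit_log: list[dict] = []


def redact_prompt(user: str, prompt: str) -> str:
    """Redact SSNs before the prompt is sent to a model and log the event."""
    redacted, count = SSN_PATTERN.subn("[REDACTED-SSN]", prompt)
    if count:
        audit_log.append({
            "user": user,
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event": "pii_redacted",
            "matches": count,
            # The original content is kept for investigators, so the log
            # itself must be protected as sensitive data.
            "original": prompt,
        })
    return redacted
```

Calling `redact_prompt("alice", "Customer SSN is 123-45-6789, please draft a letter.")` returns `"Customer SSN is [REDACTED-SSN], please draft a letter."`, and only that redacted string would be forwarded to the model.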

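After every interaction, the platform logs a complete, searchable record: who made the request, which model and agent handled it, when it happened, and which policy events it triggered.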

These logs serve compliance audits, security investigations, cost attribution, and operational analytics. For AI agents specifically, the audit trail should show not just what was asked but what actions the agent took in connected systems.

Not all governance platforms are built the same. Here’s what to look for:

Every chat and every agent action should be logged and searchable. Each record should include user identity, agent identity, model used, timestamp, connector accessed (for agents), and policy events triggered. Audit logs should also be tamper-evident and exportable for compliance review.
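One common way to make a log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below shows that technique under stated assumptions; it is not a description of how any particular platform stores logs, and `append_entry`, `verify`, and the record fields are hypothetical names.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, record: dict) -> str:
    # Serialize with sorted keys so the same record always hashes the same way.
    payload = json.dumps(record, sort_keys=True)
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append_entry(log: list[dict], record: dict) -> dict:
    """Append a record, chaining its hash to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"record": record, "prev_hash": prev_hash,
             "hash": _digest(prev_hash, record)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Recompute the chain; any edited or removed entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any stored record after the fact changes its hash, so `verify` returns `False` for the altered log. A chain like this detects tampering but does not prevent it; storing the latest hash somewhere the log's administrators cannot modify is what gives the check its value.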

AI agents connected to enterprise systems (Salesforce, Jira, ServiceNow, GitHub) should operate with scoped permissions — not blanket access to everything a connector can reach. A Deal Prep agent in Salesforce should be able to read account and opportunity data, but not delete records or access HR data. Permissions should be revocable instantly.
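Scoped permissions of this kind can be modeled as an explicit allowlist per agent and connector, with everything else denied by default. This is a minimal sketch; the `AgentScope` class and the `resource:operation` grant format are assumptions for illustration, not a real connector API.

```python
from dataclasses import dataclass, field


@dataclass
class AgentScope:
    agent: str
    # Maps connector name -> set of "resource:operation" grants (hypothetical format).
    grants: dict[str, set[str]] = field(default_factory=dict)
    revoked: bool = False

    def allows(self, connector: str, resource: str, operation: str) -> bool:
        """Deny by default: only explicitly granted operations pass."""
        if self.revoked:
            return False
        return f"{resource}:{operation}" in self.grants.get(connector, set())

    def revoke(self) -> None:
        """Cut off all access immediately."""
        self.revoked = True


# The Deal Prep agent may read accounts and opportunities, nothing else.
deal_prep = AgentScope(
    agent="deal-prep",
    grants={"salesforce": {"account:read", "opportunity:read"}},
)
```

With this scope, `deal_prep.allows("salesforce", "account", "read")` is `True`, while a delete on the same object or any request to an HR connector is `False`. After `deal_prep.revoke()`, every check fails.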

Administrators should be able to control which AI models are available to which teams. Some teams may be permitted to use frontier models like GPT-4.1 or Claude Sonnet for complex tasks; others may be restricted to faster, cheaper models. This control should operate at the workspace, team, and agent level.
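One way to combine controls at several levels is to treat each level as an allowlist and intersect them, so a narrower level can only restrict what the broader level permits. The sketch below assumes that rule; the function name and the model identifiers are placeholders, not real model names.

```python
from typing import Optional


def effective_models(
    workspace: set[str],
    team: Optional[set[str]] = None,
    agent: Optional[set[str]] = None,
) -> set[str]:
    """Intersect allowlists; a level set to None adds no restriction."""
    allowed = set(workspace)
    for narrower in (team, agent):
        if narrower is not None:
            allowed &= narrower
    return allowed


# Placeholder model identifiers for illustration only.
WORKSPACE_MODELS = {"frontier-large", "fast-small"}
SUPPORT_TEAM_MODELS = {"fast-small"}
```

Here `effective_models(WORKSPACE_MODELS, SUPPORT_TEAM_MODELS)` yields `{"fast-small"}`: the support team is limited to the cheaper model even though the workspace permits both.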

AI usage costs money. A governance platform should let administrators set spending limits per team, per user, and per agent — with alerts before limits are hit and hard caps when they are. Real-time cost dashboards broken down by team, model, and agent are essential for IT and finance.
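The alert-then-hard-cap behavior can be sketched as a budget object that checks each charge before accepting it. The 80% alert threshold and the `Budget` class are assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Budget:
    limit_usd: float
    alert_ratio: float = 0.8  # assumed: warn once 80% of the limit is used
    spent_usd: float = 0.0

    def charge(self, cost_usd: float) -> str:
        """Record a charge and return 'ok', 'alert', or 'blocked'."""
        if self.spent_usd + cost_usd > self.limit_usd:
            # Hard cap: reject the request and leave the balance unchanged.
            return "blocked"
        self.spent_usd += cost_usd
        if self.spent_usd >= self.alert_ratio * self.limit_usd:
            return "alert"
        return "ok"
```

For a team with `Budget(limit_usd=100.0)`, a $50 charge returns `"ok"`, a further $35 charge returns `"alert"`, and a further $20 charge returns `"blocked"` with spend still at $85. The same object could be instantiated per team, per user, and per agent.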

Enterprise AI governance requires integration with identity providers. SSO (for example, SAML with Okta or Azure AD) means users authenticate through your existing IdP, with no separate credential management. SCIM means user provisioning and deprovisioning are automated. When an employee leaves, their AI access is revoked automatically.

Standard audit trails log chat interactions. Agent audit trails go further: they record which systems an agent accessed, what data it read or wrote, and what actions it took — with full attribution to the user who initiated it. This is the governance layer that makes AI agents enterprise-deployable.

BYOK means the organization connects its own API keys to the underlying AI models (OpenAI, Anthropic, Google, etc.). Data flows directly from the organization to the model provider — it never touches the governance platform’s infrastructure. This is the strongest available data control short of on-premises deployment.

Some vendors offer BYOK but charge a surcharge for it. The most transparent platforms offer BYOK at zero markup.

Automated detection and redaction of sensitive data patterns before prompts reach AI models. Detection should cover standard PII (names, emails, SSNs, phone numbers), PHI (medical record numbers, diagnoses), and financial data, and it should be configurable for organization-specific data patterns. Redaction should happen pre-model, not post-response.


Regulatory obligations around data handling, model explainability, and audit trails are especially stringent in financial services. AI governance platforms enable banks and insurers to deploy AI for underwriting, claims processing, and customer service while maintaining the audit logs required by SEC, FINRA, and state regulators.

HIPAA compliance requires that PHI not be used in AI prompts without proper authorization and logging. AI governance platforms with pre-model PII/PHI redaction and full audit trails allow healthcare organizations to use AI for clinical documentation, research, and operations without creating compliance exposure.

Development teams are heavy AI users — and they work with proprietary code, architecture diagrams, and security configurations that should never leave the organization. Governance ensures developers can use AI coding assistants and PR review agents without inadvertently exposing IP to third-party model providers.

AI agents connected to CRM systems can dramatically accelerate deal prep, account research, and proposal generation. Governance ensures these agents operate with scoped permissions (read account data, not modify pipeline stages), with full logs of every action for RevOps review.

IT is often the team fielding AI tool requests from every department simultaneously. A governed AI workspace gives IT a single platform to deploy, monitor, and control AI usage across the enterprise — replacing the spreadsheet of approved tools with a single governed environment.

The market is noisy. Here’s a practical evaluation framework:

Ask vendors specifically: “Is your policy enforcement pre-model or post-response?” Pre-model enforcement (blocking before the prompt reaches the AI) is meaningfully stronger than post-response monitoring. If a vendor can’t answer clearly, that’s a red flag.

If you’re deploying or planning to deploy AI agents — and most enterprises are — your governance platform must cover agent actions, not just chat messages. Ask about per-agent permission scoping, agent-level audit trails, and connector access controls.

BYOK is table stakes for enterprise governance. But understand the details: Does the vendor charge a surcharge for BYOK usage? Where does data flow when BYOK is enabled? Is it truly zero-touch on the vendor side, or does the vendor’s infrastructure still see the data?

Governance that applies only at the organization level is insufficient. Look for per-team model controls, per-user spend limits, per-agent connector permissions, and per-workspace PII rules. The more granular the control, the more you can extend AI to sensitive use cases.

Request a demo of the audit log. It should show user identity, agent identity, model used, connector accessed, and the full interaction (subject to redaction). For compliance purposes, ask whether logs are tamper-evident and exportable in formats your auditors can use.

At minimum: SOC 2 Type II and ISO 27001. For healthcare: HIPAA BAA availability. For financial services: ask about SOC 2 Type II report scope. EU-based organizations should ask specifically about data residency and GDPR architecture.

OrgLogic note: OrgLogic is SOC 2 Type II, ISO 27001, HIPAA, and GDPR compliant. BYOK is available on all plans at zero surcharge. Governance, including audit trail, PII redaction, and cost controls, is on by default, even on the free tier.

AI governance is a moving target. Here’s where it’s heading:

Early governance tools focused on reviewing logs after the fact. Modern platforms are shifting toward real-time intervention — blocking, redacting, and flagging before interactions complete. This is the direction the market is moving, and it’s where regulatory expectations are heading too.

As enterprises move from AI chat to AI agents, the governance challenge multiplies. An agent that takes 50 actions per hour in production systems creates 50 governance events per hour. The platforms that win will be those that can govern agent actions — with the same precision and auditability as human actions — at scale.


The best governance is invisible. It’s not a separate compliance tool that slows people down; it’s built into the AI workspace so that governed behavior is the default behavior. When governance is embedded, organizations can extend AI to more sensitive functions without additional risk.

Enterprises are increasingly running multiple models and chaining agents together. Governance platforms will need to handle multi-agent workflows — tracking lineage across chains of agents, attributing actions to originating users, and enforcing policies across model boundaries.
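Attributing a chained action to its originating user amounts to walking parent links back to the step a person started. The sketch below assumes each step records its parent; the `Step` type and `originating_user` helper are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Step:
    step_id: str
    agent: str
    action: str
    parent_id: Optional[str] = None  # None for the step a user started directly
    user: Optional[str] = None       # set only on root steps


def originating_user(steps: dict[str, Step], step_id: str) -> str:
    """Follow parent links to the root step and return the user who started it."""
    seen: set[str] = set()
    current = steps[step_id]
    while current.parent_id is not None:
        if current.step_id in seen:
            raise ValueError("cycle detected in lineage")
        seen.add(current.step_id)
        current = steps[current.parent_id]
    if current.user is None:
        raise ValueError("root step has no originating user")
    return current.user
```

If a user's request to a research agent triggers a drafting agent, which in turn triggers a Slack-posting agent, calling `originating_user` on the final step returns the original requester, so the audit trail can attribute every downstream action to a person.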

AI governance is not a compliance checkbox. It’s the operational infrastructure that makes enterprise AI deployment possible — the layer that lets organizations give employees access to powerful AI tools without accepting the data, security, and compliance risks that come with uncontrolled adoption.

The organizations getting this right aren’t treating governance as a blocker. They’re using it as an enabler: the thing that lets IT say yes to AI requests instead of no, the thing that lets security sign off on agent deployments, the thing that lets finance understand what AI is actually costing.

If your organization is scaling AI in 2026, governance isn’t optional. It’s foundational.

Single-model AI tools lock you into one provider at $25-60/seat. OrgLogic is a multi-model AI workspace with named Agents that act in your systems (Salesforce, Jira, Confluence, ServiceNow), packaged Skills for domain expertise, and full governance at $8/seat. You get every model, not just one.

Bring Your Own Key means you connect your own API keys from OpenAI, Anthropic, Google, or any provider. Your data flows directly to the model provider. OrgLogic never sees, stores, or processes your prompts or responses. Zero surcharge on your own keys. This is the #1 requirement for security teams evaluating enterprise AI platforms.

An Agent is a named AI worker with a defined job, connected to your systems via Connectors. A Skill is packaged expertise that teaches an Agent how to do specific work consistently. Unlike a generic chatbot, a Deal Prep Agent with a Salesforce Connector pulls real CRM data and produces structured call briefs. Skills are reusable across Agents, versioned, and authored in plain language.

Every Workspace includes per-Agent Connector permissions (each Agent gets scoped access, not blanket access), Agent-level audit trails, automatic PII redaction, per-team budget controls, model-level access controls, and configurable guardrails. Governance is the default environment on every plan, including Free. SOC 2 Type II, ISO 27001, HIPAA, and GDPR compliant.

The Free plan covers 25 users with $500 in credits ($20 per active user, pooled). The Business plan is $8/seat/month (annual) or $10 monthly. The seat fee covers the full platform: Agents, Skills, Connectors, governance dashboard, 5 surfaces, and all features. Model usage is separate: BYOK at zero surcharge, or OrgLogic-managed models at cost + 6%.
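As a rough worked example of the Business plan arithmetic, the sketch below combines the seat fee with model usage. The seat prices and the 6% managed-model surcharge come from the figures above; the function name, the assumption that usage is billed monthly, and the example spend are hypothetical.

```python
def monthly_cost(
    seats: int,
    model_spend_usd: float,
    annual_billing: bool = True,
    byok: bool = True,
) -> float:
    """Estimate monthly cost: seat fees plus model usage (assumed billed monthly)."""
    seat_price = 8.0 if annual_billing else 10.0  # $/seat/month
    surcharge = 0.0 if byok else 0.06             # managed models: cost + 6%
    return round(seats * seat_price + model_spend_usd * (1 + surcharge), 2)
```

For 100 seats and a hypothetical $1,000 of monthly model usage, this gives $1,800 with annual billing and BYOK, $1,860 with annual billing and managed models, and $2,000 with monthly billing and BYOK.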

80% of employees already use AI tools without IT approval. OrgLogic replaces fragmented, ungoverned tools with one AI workspace employees actually want to use, available on web, Slack, Teams, Chrome, and API. One customer, a regulated tech company with 1,500 employees, reduced shadow AI by 91% within 6 weeks while cutting AI spend by 70%.

OrgLogic Connectors integrate with Salesforce, Jira, Confluence, ServiceNow, SharePoint, Google Workspace, Slack, SAP, and more via custom APIs. Each Connector has per-Agent permission scopes controlled by IT, so your Deal Prep Agent only accesses the Salesforce objects you approve. The Connector library is growing and new integrations ship regularly.

Self-serve signup takes 30 seconds. Connect your API keys in 2 minutes. Deploy pre-built Agents for sales, support, engineering, HR, and legal on day one. The Free plan (25 users, full governance) lets you pilot without procurement. One customer had engineers adopting within 2 weeks across Slack and Chrome. Enterprise plans add SSO/SCIM, VPC, and on-prem deployment.